When NVIDIA introduced its GeForce RTX 2000 series to the world in 2018, the graphics card market was shaken to its core. These RTX graphics cards revolutionized the gaming industry.

Although the concept of ray tracing had existed for a while before the official announcement by NVIDIA, we hadn’t previously seen any real ray tracing from a single graphics card.

This market-shifting move was bolstered by NVIDIA’s decision to release the finest representation of its revolutionary technology right away.

Several years on, we’ve seen NVIDIA release the RTX 4080 and RTX 4090 as some of the highest-performing cards on the market. However, we do have to admit that AMD finally put up a strong fight against NVIDIA with its RX 6000 Series GPUs.

However, this performance comes at a cost. Technology wasn’t the only thing being pushed to its limit.

When the RTX 2000 series launched, the biggest concern for anyone considering a new GPU was the price. At the time, the best card available was the RTX 2080 Ti, which had an excessive cost that kept away even the most loyal NVIDIA fans.

However, NVIDIA sought to rectify this. With the release of the exceptional RTX 3080 at $700, we could finally enjoy premium performance at a more affordable price. There were also the mid-range RTX 3070 at $500 and RTX 3060 Ti at $400.

In 2022, ray tracing became even more expensive with the release of NVIDIA’s RTX 4000 Series. The RTX 4080, for instance, launched at $1200, roughly 70% more than the RTX 3080!
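To make that comparison concrete, here is a small sketch of the arithmetic behind the percentage. It only uses the two launch prices quoted in this article; the helper name `percent_increase` is made up for illustration.

```python
def percent_increase(old_price: float, new_price: float) -> float:
    """Return the price increase as a percentage of the old price."""
    return (new_price - old_price) / old_price * 100.0

# Launch prices quoted in this article, in USD.
rtx_3080_price = 700
rtx_4080_price = 1200

increase = percent_increase(rtx_3080_price, rtx_4080_price)
print(f"RTX 3080 -> RTX 4080: {increase:.1f}% more expensive")
```

The exact figure comes out slightly above the rounded 70% used in the text.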


What You Get With RTX

Ray Tracing

Ray tracing wasn’t the only addition NVIDIA included, but it was certainly the most advertised feature.

At first, the more skeptical PC hardware enthusiasts were dubious about the idea and were quick to criticize and make memes mocking the technology as soon as it was announced.

They were somewhat accurate in their assessment that ray tracing would not (always) bring a vast improvement in the looks department. However, on closer inspection, even those steadfast in their beliefs had to admit that it brought visual refinements.

How It Works

In the past, games did show reflections and lighting effects, but the truth is that those were part of an elaborate smoke and mirrors illusion. The static lighting effect would be hard-coded to show reflections and shadows that could look attractive, but it wasn’t the real deal.

The game developers had to devise ways to make their games look properly shaded and illuminated using these tricks. The fact that they often did so successfully is a testament to their imaginative and clever approaches.

RTX brings real-time simulation of light rays to the table. The in-game world is lit dynamically, allowing for far more lifelike and immersive visuals.

These visual effects can now be rendered so precisely that we’re slowly but steadily moving towards lifelike video game graphics.

When lighting and reflections are calculated with RTX, the engine takes into account the material of the surface the light bounces off.

For example, a reflection is rendered differently if the reflecting surface is water, as opposed to glass. Similarly, light will look different when hitting a marble floor or sand.
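As a rough illustration of why water and glass reflect differently, here is a minimal sketch using Schlick's approximation of Fresnel reflectance, a formula widely used in renderers. This is an assumption chosen for illustration, not a description of what any particular RTX game does. The refractive indices (about 1.33 for water, about 1.5 for glass) are typical textbook values, and `schlick_reflectance` is a hypothetical helper name.

```python
import math

def schlick_reflectance(cos_theta: float, n_outside: float, n_inside: float) -> float:
    """Approximate fraction of light reflected at a surface (Schlick's approximation).

    cos_theta is the cosine of the angle between the incoming ray and the surface normal.
    """
    r0 = ((n_outside - n_inside) / (n_outside + n_inside)) ** 2
    return r0 + (1.0 - r0) * (1.0 - cos_theta) ** 5

AIR = 1.0
MATERIALS = {"water": 1.33, "glass": 1.5}  # typical approximate refractive indices

for name, ior in MATERIALS.items():
    head_on = schlick_reflectance(1.0, AIR, ior)  # looking straight at the surface
    grazing = schlick_reflectance(math.cos(math.radians(80)), AIR, ior)  # shallow angle
    print(f"{name}: head-on {head_on:.3f}, at 80 degrees {grazing:.3f}")
```

Both materials reflect much more at shallow angles, but glass reflects more than water when viewed head-on, which is one reason the two look different under the same light.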

Below is a tech demo for ray tracing capabilities, showcased for Battlefield V at CES 2019.

Interestingly, RTX wasn’t the first technology to provide its audience with the magic of ray tracing. In fact, most modern movies with costly CGI effects feature ray tracing.

Although The Compleat Angler from 1979, which was produced by Bell Labs engineer Turner Whitted, is credited as the first use of ray tracing, it wasn’t until 2013, with Pixar’s Monsters University, that the technology was fully embraced.

**So how come Pixar did it in 2013, and players had to wait until 2018?**

Prerendered ray tracing vs. real-time ray tracing

The answer is straightforward. What Pixar did is entirely different from the ray tracing we see in games. Pixar (or any other CGI animation studio) can prerender every individual scene, which can take hours, weeks, or even months to compute.

Once all those scenes are prepared, they can be assembled together and turned into a film such as Monsters University.

In contrast, visuals in video games with ray tracing are rendered in real time. RTX 2000, 3000, and RX 6000 GPUs process ray tracing continuously, which is why it has such a substantial impact on in-game performance. In games such as Metro: Exodus, the typical FPS could drop by 40% or more and might cause stuttering.
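To put a 40% drop into perspective, it helps to convert FPS into the time the GPU has to finish each frame. The sketch below assumes a hypothetical 60 FPS baseline, which is not a measured figure from any specific game.

```python
def frame_time_ms(fps: float) -> float:
    """Time available to render one frame, in milliseconds."""
    return 1000.0 / fps

baseline_fps = 60.0  # hypothetical frame rate with ray tracing off
drop = 0.40          # the 40% drop mentioned above

ray_traced_fps = baseline_fps * (1.0 - drop)
extra_ms = frame_time_ms(ray_traced_fps) - frame_time_ms(baseline_fps)

print(f"{baseline_fps:.0f} FPS -> {ray_traced_fps:.0f} FPS")
print(f"Each frame takes about {extra_ms:.1f} ms longer")
```

In other words, under these assumptions every single frame spends several extra milliseconds on ray tracing, which is where the performance goes.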

We also need to consider that ray tracing in video games is basic compared to some ray-traced scenes in animated films. An RTX 3000 or RX 6000 GPU would need months to process a complex ray-traced scene. It would be impractical to do it in real time.

The way scenes are ray traced with RTX GPUs is also notably different compared to animated movie scenes.

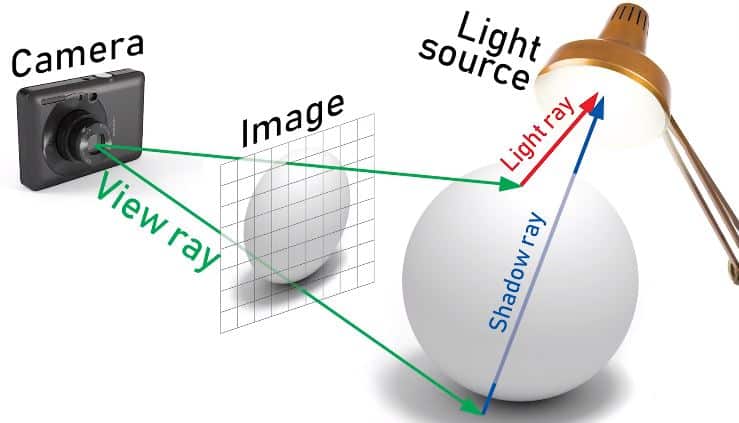

A ray is cast from the player’s camera through each pixel to find the object behind it, and from there toward the source of light. Ray tracing also takes into account whether the object’s exposure to light is slightly altered or totally blocked. Here is a helpful depiction of how it works.
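The same idea can be sketched in a few lines of code. This is a toy illustration with assumed details (spheres instead of game geometry, a camera at the origin looking down the negative z axis, a single point light), not how an actual game engine or RTX driver is written. All function names are hypothetical.

```python
import math

def sub(a, b):
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])

def dot(a, b):
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]

def normalize(v):
    length = math.sqrt(dot(v, v))
    return (v[0] / length, v[1] / length, v[2] / length)

def hit_sphere(origin, direction, center, radius):
    """Distance along a normalized ray to the nearest hit in front of it, or None."""
    oc = sub(origin, center)
    b = dot(oc, direction)
    c = dot(oc, oc) - radius * radius
    disc = b * b - c
    if disc < 0.0:
        return None
    root = math.sqrt(disc)
    for t in (-b - root, -b + root):
        if t > 1e-4:  # small offset so a surface does not shadow itself
            return t
    return None

def trace_pixel(px, py, width, height, spheres, light):
    """Return None on a miss, 0.0 if the hit point is in shadow,
    otherwise a diffuse brightness between 0 and 1."""
    # Primary ray: from the camera through the centre of pixel (px, py).
    u = (px + 0.5) / width * 2.0 - 1.0
    v = 1.0 - (py + 0.5) / height * 2.0
    direction = normalize((u, v, -1.0))
    camera = (0.0, 0.0, 0.0)

    nearest = None
    for center, radius in spheres:
        t = hit_sphere(camera, direction, center, radius)
        if t is not None and (nearest is None or t < nearest[0]):
            nearest = (t, center, radius)
    if nearest is None:
        return None

    t, center, radius = nearest
    point = tuple(camera[i] + direction[i] * t for i in range(3))
    normal = normalize(sub(point, center))

    # Shadow ray: from the hit point toward the light.
    to_light = sub(light, point)
    light_distance = math.sqrt(dot(to_light, to_light))
    light_dir = normalize(to_light)
    for other_center, other_radius in spheres:
        blocked_at = hit_sphere(point, light_dir, other_center, other_radius)
        if blocked_at is not None and blocked_at < light_distance:
            return 0.0  # something sits between the point and the light
    return max(0.0, dot(normal, light_dir))  # simple diffuse shading

light = (0.0, 5.0, 0.0)
ball = ((0.0, 0.0, -5.0), 1.0)
occluder = ((0.0, 2.5, -2.0), 0.5)  # placed between the ball and the light

lit = trace_pixel(2, 2, 5, 5, [ball], light)
shadowed = trace_pixel(2, 2, 5, 5, [ball, occluder], light)
missed = trace_pixel(0, 0, 5, 5, [ball, occluder], light)
print(lit, shadowed, missed)
```

The centre pixel sees the ball lit when nothing is in the way and fully dark once the occluder blocks the path to the light, while the corner pixel hits nothing at all.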

This is accomplished using bounding volume hierarchy (BVH) traversal which, as the name suggests, is an algorithm for traversing a BVH tree structure. Although this significantly reduces the computational requirement, there is still a very substantial amount of work left over.
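To show why a BVH cuts down the work, here is a minimal sketch of building and traversing one. It makes several simplifying assumptions: the "objects" are plain axis-aligned boxes rather than triangles, the tree is split at the median along the widest axis, and each leaf holds one object. Real implementations differ in all of these details, and the names `Node`, `build`, and `traverse` are hypothetical.

```python
from dataclasses import dataclass, field

@dataclass
class Node:
    lo: tuple                      # minimum corner of the bounding box
    hi: tuple                      # maximum corner of the bounding box
    left: "Node | None" = None
    right: "Node | None" = None
    items: list = field(default_factory=list)  # object indices (leaves only)

def ray_hits_box(origin, direction, lo, hi):
    """Slab test: does the ray pass through the axis-aligned box?"""
    t_near, t_far = 0.0, float("inf")
    for axis in range(3):
        if direction[axis] == 0.0:
            # Ray is parallel to this pair of planes: it must start between them.
            if not (lo[axis] <= origin[axis] <= hi[axis]):
                return False
            continue
        t0 = (lo[axis] - origin[axis]) / direction[axis]
        t1 = (hi[axis] - origin[axis]) / direction[axis]
        if t0 > t1:
            t0, t1 = t1, t0
        t_near, t_far = max(t_near, t0), min(t_far, t1)
    return t_near <= t_far

def build(boxes, indices):
    """Recursively build a BVH over the boxes listed in indices."""
    lo = tuple(min(boxes[i][0][a] for i in indices) for a in range(3))
    hi = tuple(max(boxes[i][1][a] for i in indices) for a in range(3))
    if len(indices) == 1:
        return Node(lo, hi, items=list(indices))
    axis = max(range(3), key=lambda a: hi[a] - lo[a])  # widest axis
    ordered = sorted(indices, key=lambda i: boxes[i][0][axis] + boxes[i][1][axis])
    mid = len(ordered) // 2
    return Node(lo, hi, build(boxes, ordered[:mid]), build(boxes, ordered[mid:]))

def traverse(root, origin, direction):
    """Return (sorted indices of objects hit, number of box tests performed)."""
    hits, tests, stack = [], 0, [root]
    while stack:
        node = stack.pop()
        tests += 1
        if not ray_hits_box(origin, direction, node.lo, node.hi):
            continue  # the whole subtree is skipped
        if node.left is None:
            hits.extend(node.items)  # leaf: its box is the object itself here
        else:
            stack.extend((node.left, node.right))
    return sorted(hits), tests

# 64 unit boxes in a row along the x axis, with gaps between them.
boxes = [((2.0 * i, 0.0, 0.0), (2.0 * i + 1.0, 1.0, 1.0)) for i in range(64)]
root = build(boxes, list(range(len(boxes))))

origin, direction = (10.5, 0.5, -5.0), (0.0, 0.0, 1.0)
hits, tests = traverse(root, origin, direction)
brute_force = [i for i, (lo, hi) in enumerate(boxes)
               if ray_hits_box(origin, direction, lo, hi)]
print(f"hit {hits} with {tests} box tests instead of {len(boxes)}")
```

The traversal finds the same object as testing every box one by one, but it discards half of the remaining scene each time a bounding box is missed.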

GPUs that don’t have additional ray tracing hardware would be required to use shaders, which would create a substantial bottleneck.

Enter RT cores.

NVIDIA’s direct solution to that additional computational requirement is to assign dedicated cores to those calculations. The RT cores hold two separate units where one handles the bounding box tests, and the other performs ray-triangle intersection tests. This significantly reduces the strain on the GPU and allows it to perform other tasks more efficiently.
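For a sense of what a ray-triangle intersection test involves, here is a sketch of the Möller-Trumbore algorithm, a well-known software method for this test. It is shown only as an example of the kind of calculation involved; the source does not say which method the RT core hardware uses internally. The helper names are hypothetical.

```python
def sub(a, b):
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])

def dot(a, b):
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]

def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def ray_triangle(origin, direction, v0, v1, v2, eps=1e-9):
    """Return the distance along the ray to the triangle, or None if it misses."""
    edge1, edge2 = sub(v1, v0), sub(v2, v0)
    p = cross(direction, edge2)
    det = dot(edge1, p)
    if abs(det) < eps:
        return None  # ray is parallel to the triangle's plane
    inv_det = 1.0 / det
    s = sub(origin, v0)
    u = dot(s, p) * inv_det          # first barycentric coordinate
    if u < 0.0 or u > 1.0:
        return None
    q = cross(s, edge1)
    v = dot(direction, q) * inv_det  # second barycentric coordinate
    if v < 0.0 or u + v > 1.0:
        return None
    t = dot(edge2, q) * inv_det
    return t if t > eps else None    # ignore hits behind the ray origin

triangle = ((0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0))
down = (0.0, 0.0, -1.0)

inside = ray_triangle((0.25, 0.25, 1.0), down, *triangle)
outside = ray_triangle((2.0, 2.0, 1.0), down, *triangle)
parallel = ray_triangle((0.25, 0.25, 1.0), (1.0, 0.0, 0.0), *triangle)
print(inside, outside, parallel)
```

A scene can contain millions of triangles and every ray may need many of these tests, which is why moving them off the general-purpose shaders matters.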

Deep Learning Super Sampling – DLSS

NVIDIA introduced several improvements for AI calculations with its RTX cards, but the most significant use can be seen with DLSS.

DLSS is a machine learning-based upscaling technology that uses RTX’s Tensor Cores. The initial version of DLSS brought extra FPS in games, but at the cost of reduced image quality, with flickering, ghosting, and other artifacts.

Over time, though, NVIDIA has consistently enhanced this feature, improving it in all aspects, especially image quality.

Frame Generation

The most recent major update is DLSS 3.0 or Frame Generation, which is only accessible to RTX 4000 Series GPUs.

Frame Generation can raise in-game frame rates by creating extra frames using AI, making ray tracing more accessible.

DLSS 3.0 is still in its early stages, so there aren’t many games that support it. But we’re sure it will become a lot more widespread and all future RTX GPUs will benefit from it.

Don’t worry though, NVIDIA’s upcoming updates on DLSS (excluding Frame Generation) will be available to previous RTX generations.

Big Tech Equals Big Price

Despite what the internet might say about it, there’s nothing wrong with acknowledging how exceptional NVIDIA’s technology is in its RTX series.

What isn’t so remarkable is that ray tracing is becoming more and more demanding, so getting a decent framerate is difficult, even for the best GPUs out there.

On a more positive note, the industry is deeply impressed by the tech of ray tracing and DLSS. As time passes, we’re likely to see this tech being utilized to its full potential more frequently.

As NVIDIA is sitting securely on its GPU throne, it has the power to dictate the price points for its cards. When it introduces the gaming world to groundbreaking technology such as real-time ray tracing, it can’t be blamed for taking advantage and testing the boundaries of its consumers’ wallets.

One might look at the RTX 4090 and its $1600 price and note that it is even more expensive than the RTX 3090. And its smaller sibling, the RTX 4080, is now more expensive than all of its predecessors at $1200.

We have yet to see the performance and pricing of mid-range GPUs like the RTX 4060 or RTX 4070, but we presume they’ll end up costlier than previous generations.

Even AMD is taking a similar approach to NVIDIA with its RDNA 3 GPUs. The high-end GPUs, the RX 7900 XT and RX 7900 XTX, are priced at $900 and $1000, respectively.

On release, RTX might have been above the anticipated and comfortable price range, but RTX 3000 found its footing in terms of both performance and cost. However, NVIDIA worsened things with the RTX 4000 Series, making this one of the most costly GPU generations in history.

Still, considering the DLSS technology and the clear improvement of ray tracing, we can say they are certainly worth the money.